Starting with version 2.7, Sipwise C5 uses a dedicated network.yml file to configure the IP addresses of the system. There are two reasons for this: in a distributed system, any particular node can access all IPs of all nodes for all services; and /etc/network/interfaces can be generated automatically for all nodes based on this central configuration file.

The basic structure of the file looks like this:

    hosts:
      self:
        role:
          - proxy
          - lb
          - mgmt
        interfaces:
          - eth0
          - lo
        eth0:
          ip: 192.168.51.213
          netmask: 255.255.255.0
          type:
            - sip_ext
            - rtp_ext
            - web_ext
            - web_int
        lo:
          ip: 127.0.0.1
          netmask: 255.255.255.0
          type:
            - sip_int
            - ha_int

A more complete sample configuration is shown in the network.yml Overview section of the handbook.

The file contains all configuration parameters under the main key: hosts

In Sipwise C5 systems all hosts of the system are defined, and the names are the actual host names instead of self, like this:

On a PRO it would look like:

    hosts:
      sp1:
        peer: sp2
        role: ...
        interfaces: ...
      sp2:
        peer: sp1
        role: ...
        interfaces: ...

On a CARRIER it would look like:

    hosts:
      web01a:
        peer: web01b
        role: ...
        interfaces: ...
      web01b:
        peer: web01a
        role: ...
        interfaces: ...

There are three different main sections for a host in the config file: role, interfaces and the actual interface definitions.

role: The role setting is an array defining which logical roles a node will act as. Possible entries for this setting are:

- mgmt: This entry means the host is acting as management node for the platform. In a Sipwise C5 system this option must always be set. The management node exposes the admin and CSC panels to the users and the APIs to external applications and is used to export CDRs. Please note: on a CARRIER this is only set on the nodes of the management pairs. This node is also the source of the installations of other nodes via iPXE and has the approx service (apt proxy).

- lb: This entry means the host is acting as SIP load-balancer for the platform. In a Sipwise C5 system this option must always be set. Please note: on a CARRIER this is only set on the nodes of the lb pairs. The SIP load-balancer acts as an ingress and egress point for all SIP traffic to and from the platform.

- proxy: This entry means the host is acting as SIP proxy for the platform. In a Sipwise C5 system this option must always be set. Please note: on a CARRIER this is only set on the nodes of the proxy pairs. The SIP proxy acts as registrar, proxy and application server and media relay, and is responsible for providing the features for all subscribers provisioned on it.

- db: This entry means the host is acting as the database node for the platform. In a Sipwise C5 system this option must always be set. Please note: on a CARRIER this is only set on the nodes of the db pairs. The database node exposes the MySQL and Redis databases.

- rtp: This entry means the host is acting as the RTP relay node for the platform. In a Sipwise C5 system this option must always be set. Please note: on a CARRIER this is only set on the nodes of the RTP relay pairs. The RTP relay node runs the rtpengine Sipwise C5 component.

- li: (CARRIER-only) This entry means the host is acting as the interface towards a lawful interception service provider.

interfaces: The interfaces setting is an array defining all interface names in the system. The actual interface details are set in the actual interface settings below. It typically includes lo, eth0, eth1 physical and a number of virtual interfaces, like: bond0, vlanXXX

<interface name>: After the interfaces are defined in the interfaces setting, each of those interfaces needs to be specified as a separate set of parameters.

Additional main parameters of a node:

- dbnode: the sequence number (unique ID) of the node in the database cluster;

- peer: the hostname of the peer node within the pair of nodes (e.g. "sp2" for sp1 host on a PRO; "web01b" for web01a host on a CARRIER). The purpose of that: each node knows its companion for providing high availability, data replication etc.

- status: one of online, offline, inactive. inactive means that the node is up but is not ready to work in the cluster (installing process). offline means that the node is not reachable. online is a normal working node.
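The peer parameter described above is meant to be symmetric within a pair of nodes. A minimal sketch of how that could be checked is shown below; the dictionary is a hypothetical in-memory copy of the hosts section and the function name is an assumption for illustration, not part of any Sipwise C5 tool.

```python
# Hypothetical in-memory copy of the "hosts" section of network.yml;
# only the "peer" key is modelled here.
hosts = {
    "sp1": {"peer": "sp2"},
    "sp2": {"peer": "sp1"},
}


def find_peer_mismatches(hosts):
    """Return (host, peer) pairs whose peer does not point back at the host."""
    mismatches = []
    for name, settings in hosts.items():
        peer = settings.get("peer")
        if peer is None:
            continue  # a host without a peer entry is not checked
        back = hosts.get(peer, {}).get("peer")
        if back != name:
            mismatches.append((name, peer))
    return mismatches


print(find_peer_mismatches(hosts))  # []
```

An empty list means every host that names a peer is named back by that peer.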

- hwaddr: MAC address of the interface

  Caution: On a CARRIER this must be filled in properly for the interface that is used as type ha_int, because its value will be used during the boot process of the installation of nodes via iPXE, if PXE-boot is enabled.

- ip: IPv4 address of the node

- v6ip: IPv6 address of the node; optional

- netmask: IPv4 netmask

- v6netmask: IPv6 netmask

- gateway: IPv4 gateway address

- v6gateway: IPv6 gateway address

- shared_ip: shared IPv4 address of the pair of nodes; this is a list of addresses

- shared_v6ip: shared IPv6 address of the pair of nodes; optional; this is a list of addresses

- shared_ip_only: Boolean switch (yes or no) to enable usage with just a shared floating IPv4 address, without a static IPv4 address configured. To prevent accidental misconfiguration, this usage mode must be explicitly enabled. This usage mode is disallowed for certain interface types (e.g. ssh, mon, or ha).

- shared_v6ip_only: same as above, but for IPv6

- advertised_ip: the IP address that is used in SIP messages when the Sipwise C5 system is behind NAT/SBC. An example of such a deployment is Amazon AMI, where the server doesn’t have a public IP, so the load-balancer component of Sipwise C5 needs to know what its public domain is (→advertised_ip).

- type: type of services that the node provides; these are usually the VLANs defined for a particular Sipwise C5 system.

  Info: You can assign a type only once per node.

Available types are:

- api_int: internal, API-based communication interface. It is used for the internal communication of such services as faxserver, fraud detection and others.

- aux_ext: interface for potentially insecure external components like remote system log collection service.

  Info: For example the CloudPBX module can use it to provide time services and remote logging facilities to end customer devices. The type aux_ext is assigned to the lo interface by default. If this type needs to be exposed to the public, it is recommended to assign the type aux_ext to a separate VLAN interface to be able to limit or even block the incoming traffic easily via firewalling in case of emergency, like a (D)DoS attack on external services.

- mon_ext: remote monitoring interface (e.g. SNMP)

- rtp_ext: main (external) interface for media traffic

- sip_ext: main (external) interface for SIP signalling traffic between NGCP and other SIP endpoints

- sip_ext_incoming: additional, optional interface for incoming SIP signalling traffic

- sip_int: internal SIP interface used by Sipwise C5 components (lb, proxy, etc.)

- ssh_ext: command line (SSH) remote access interface

- ssh_int: command line (SSH) internal NGCP access interface

- web_ext: interface for web-based or API-based provisioning and administration

- web_int: interface for the administrator’s web panel, its API and generic internal API communication

- li_int: used for LI (Lawful Interception) traffic routing

- ha_int: HA (High Availability) communication interface between the services

- boot_int: the default VLAN used to install nodes via PXE-boot method

- rtp_int: internal interface for handling RTP traffic among Sipwise C5 nodes that may reside at a greater distance from each other, like in case of a specialised NGCP configuration with centralized web / DB / proxy nodes and distributed LB nodes. (Please refer to Cluster Sets section for further details)

Info: Please note that, apart from the standard ones described so far, there might be other types defined for a particular Sipwise C5 system.

- vlan_raw_device: tells which physical interface is used by the particular VLAN

- post_up: routes can be defined here (interface-based routing), for example:

      post_up:
        - route add -host 1.2.3.4 gw 192.168.1.1 dev vlan70
        - route add -net 10.11.12.0/21 gw 192.168.1.2 dev vlan300
        - route del -host 1.2.3.4 gw 192.168.1.1 dev vlan70
        - route del -net 10.11.12.0/21 gw 192.168.1.2 dev vlan300

- bond_XY: specific to "bond0" interface only; these contain Ethernet bonding properties
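To illustrate how the role, interfaces and per-interface type entries fit together, the following sketch looks up the IP address that serves a given type on a host and reports types that are assigned to more than one interface. The dictionary mirrors the basic structure shown at the start of this section; the function names are assumptions for illustration only, and the check deliberately skips sip_ext_incoming and rtp_ types, which this handbook allows on several interfaces.

```python
# Hypothetical in-memory copy of one host entry of network.yml.
host = {
    "role": ["proxy", "lb", "mgmt"],
    "interfaces": ["eth0", "lo"],
    "eth0": {
        "ip": "192.168.51.213",
        "netmask": "255.255.255.0",
        "type": ["sip_ext", "rtp_ext", "web_ext", "web_int"],
    },
    "lo": {
        "ip": "127.0.0.1",
        "netmask": "255.255.255.0",
        "type": ["sip_int", "ha_int"],
    },
}


def interfaces_by_type(host):
    """Map each type to the list of interfaces that carry it."""
    result = {}
    for ifname in host["interfaces"]:
        for iftype in host[ifname].get("type", []):
            result.setdefault(iftype, []).append(ifname)
    return result


def duplicated_types(host):
    """Return types found on more than one interface of the host."""
    duplicates = []
    for iftype, names in interfaces_by_type(host).items():
        if len(names) < 2:
            continue
        # Assumption: these types may legitimately appear more than once.
        if iftype == "sip_ext_incoming" or iftype.startswith("rtp_"):
            continue
        duplicates.append(iftype)
    return sorted(duplicates)


def ip_for_type(host, iftype):
    """Return the IPv4 address of the first interface carrying iftype, or None."""
    names = interfaces_by_type(host).get(iftype)
    return host[names[0]]["ip"] if names else None


print(ip_for_type(host, "sip_ext"))  # 192.168.51.213
print(duplicated_types(host))        # []
```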

You now have a typical deployment and are good to go. However, you may need to do extra configuration depending on the devices you are using and the functionality you want to achieve.

The file /etc/hosts is generated by a template, containing entries for basic host configuration (localhost and basic IPv4/IPv6), and the IPs of other nodes in PRO/CARRIER configurations.

Extra entries can be added to this file in several ways:

- etc_hosts_global_extra_entries at the global level, added to all hosts

- etc_hosts_global_extra_entries at the host level, which overrides the global one if for some reason the whole content is undesired for a particular host (e.g. to have some but not all of the "default" global entries)

- etc_hosts_local_extra_entries at the host level, which are added only to the hosts where this entry is present, if for some reason it is desired to have extra entries only visible in some subset of the hosts

The behaviour is the same in all cases: the entries are appended directly to /etc/hosts.

Example of both in a configuration file:

    ---
    hosts_common:
      etc_hosts_global_extra_entries:
        - 10.100.1.1 server-1 server-1.internal.example.com
        - 10.100.1.2 server-2 server-2.internal.example.com
    hosts:
      db01b:
        etc_hosts_local_extra_entries:
          - 127.0.1.1 local-alias-1.db01b
          - 127.0.2.1 local-alias-2.db01b
          - 172.30.52.180 db01b.example.com
        ...
      web01a:
        etc_hosts_local_extra_entries:
          - 127.0.1.1 local-alias-1.web01a
          - 127.0.2.1 local-alias-2.web01a
          - 172.30.52.168 web01a.example.com
        etc_hosts_global_extra_entries:
          - 10.100.1.1 server-1 server-1.internal.example.com
        ...

With this, the additional output in /etc/hosts for db01b will be:

    # local extra entries for host 'db01b'
    127.0.1.1 local-alias-1.db01b
    127.0.2.1 local-alias-2.db01b
    172.30.52.180 db01b.example.com
    # global extra entries
    10.100.1.1 server-1 server-1.internal.example.com
    10.100.1.2 server-2 server-2.internal.example.com

and in web01a:

    # local extra entries for host 'web01a'
    127.0.1.1 local-alias-1.web01a
    127.0.2.1 local-alias-2.web01a
    172.30.52.168 web01a.example.com
    # global extra entries overridden for host 'web01a'
    10.100.1.1 server-1 server-1.internal.example.com
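The override rule described above (a host-level etc_hosts_global_extra_entries replaces the global list, while local entries are always added) can be sketched as follows. This is only an illustration of the rule under the assumption that the configuration has already been loaded into a dictionary; the real file is produced by a template, and the function name is hypothetical.

```python
# Hypothetical in-memory copy of the example configuration shown above.
config = {
    "hosts_common": {
        "etc_hosts_global_extra_entries": [
            "10.100.1.1 server-1 server-1.internal.example.com",
            "10.100.1.2 server-2 server-2.internal.example.com",
        ],
    },
    "hosts": {
        "db01b": {
            "etc_hosts_local_extra_entries": [
                "127.0.1.1 local-alias-1.db01b",
                "127.0.2.1 local-alias-2.db01b",
                "172.30.52.180 db01b.example.com",
            ],
        },
        "web01a": {
            "etc_hosts_local_extra_entries": [
                "127.0.1.1 local-alias-1.web01a",
                "127.0.2.1 local-alias-2.web01a",
                "172.30.52.168 web01a.example.com",
            ],
            "etc_hosts_global_extra_entries": [
                "10.100.1.1 server-1 server-1.internal.example.com",
            ],
        },
    },
}


def extra_hosts_lines(config, hostname):
    """Return the extra /etc/hosts lines for one host: local first, then global."""
    host = config["hosts"][hostname]
    lines = []
    local = host.get("etc_hosts_local_extra_entries", [])
    if local:
        lines.append(f"# local extra entries for host '{hostname}'")
        lines.extend(local)
    if "etc_hosts_global_extra_entries" in host:
        # A host-level list replaces the global one entirely.
        lines.append(f"# global extra entries overridden for host '{hostname}'")
        lines.extend(host["etc_hosts_global_extra_entries"])
    else:
        common = config.get("hosts_common", {})
        global_entries = common.get("etc_hosts_global_extra_entries", [])
        if global_entries:
            lines.append("# global extra entries")
            lines.extend(global_entries)
    return lines


print("\n".join(extra_hosts_lines(config, "web01a")))
```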

By default, the load-balancer listens on the UDP and TCP ports 5060 (kamailio→lb→port) and TLS port 5061 (kamailio→lb→tls→port). If you need to set up one or more extra SIP listening ports or IP addresses in addition to those standard ports, please edit the kamailio→lb→extra_sockets option in your /etc/ngcp-config/config.yml file.

The correct format consists of a label and value like this:

    extra_sockets:
      port_5064: udp:10.15.20.108:5064
      test: udp:10.15.20.108:6060

The label is shown in the outbound_socket peer preference (if you want to route calls to a specific peer out via a specific socket); the value must contain a transport specification as in the example above (udp, tcp or tls). After adding the socket, execute ngcpcfg apply:

    ngcpcfg apply 'added extra socket'
    ngcpcfg push all

The direction of communication through this SIP extra socket is incoming+outgoing. The Sipwise C5 will answer the incoming client registrations and other methods sent to the extra socket. For such incoming communication no configuration is needed. For the outgoing communication the new socket must be selected in the outbound_socket peer preference. For more details read the next section, “Extra SIP and RTP Sockets” (Section 12.2.3), which covers peer configuration for SIP and RTP in greater detail.

Important: In this section you have just added an extra SIP socket. RTP traffic will still use your rtp_ext IP address.
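The label and value format of extra_sockets can be checked before applying the configuration. The sketch below splits a value into transport, address and port; it assumes IPv4 addresses only and is not the parser used by Sipwise C5 itself, and the function name is hypothetical.

```python
import ipaddress

# Transports named in this section for extra sockets.
VALID_TRANSPORTS = ("udp", "tcp", "tls")


def parse_extra_socket(value):
    """Split 'transport:ip:port' into (transport, ip, port), validating each part."""
    transport, _, rest = value.partition(":")
    address, _, port = rest.rpartition(":")
    if transport not in VALID_TRANSPORTS:
        raise ValueError(f"unsupported transport: {transport!r}")
    # Assumption: IPv4 only; raises a ValueError subclass on a malformed address.
    ipaddress.IPv4Address(address)
    port_number = int(port)
    if not 0 < port_number < 65536:
        raise ValueError(f"port out of range: {port_number}")
    return transport, address, port_number


extra_sockets = {
    "port_5064": "udp:10.15.20.108:5064",
    "test": "udp:10.15.20.108:6060",
}

for label, value in extra_sockets.items():
    print(label, parse_extra_socket(value))
```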

If you want to use an additional interface (with a different IP address) for SIP signalling and RTP traffic you need to add your new interface in the /etc/network/interfaces file. Also the interface must be declared in /etc/ngcp-config/network.yml.

Suppose we need to add a new SIP socket and a new RTP socket on VLAN 100. You can use the ngcp-network tool for adding interfaces without having to manually edit this file. On a PRO system that would be:

    ngcp-network --set-interface=eth0.100 --host=sp1 --ip=auto --netmask=auto --hwaddr=auto --type=sip_ext_incoming --type=rtp_int_100
    ngcp-network --set-interface=eth0.100 --host=sp2 --ip=auto --netmask=auto --hwaddr=auto --type=sip_ext_incoming --type=rtp_int_100

On a CARRIER system that would be:

    ngcp-network --set-interface=eth0.100 --host=lb01a --ip=auto --netmask=auto --hwaddr=auto --type=sip_ext_incoming
    ngcp-network --set-interface=eth0.100 --host=lb01b --ip=auto --netmask=auto --hwaddr=auto --type=sip_ext_incoming
    ngcp-network --set-interface=eth0.100 --host=prx01a --ip=auto --netmask=auto --hwaddr=auto --type=rtp_int_100
    ngcp-network --set-interface=eth0.100 --host=prx01b --ip=auto --netmask=auto --hwaddr=auto --type=rtp_int_100

On a PRO system the generated files would look like:

    sp1:
    ..
    ..
      eth0.100:
        hwaddr: ff:ff:ff:ff:ff:ff
        ip: 192.168.1.2
        netmask: 255.255.255.0
        shared_ip:
          - 192.168.1.3
        shared_v6ip: ~
        type:
          - sip_ext_incoming
          - rtp_int_100
    ..
    ..
      interfaces:
        - lo
        - eth0
        - eth0.100
        - eth1
    ..
    ..
    sp2:
    ..
    ..
      eth0.100:
        hwaddr: ff:ff:ff:ff:ff:ff
        ip: 192.168.1.4
        netmask: 255.255.255.0
        shared_ip:
          - 192.168.1.3
        shared_v6ip: ~
        type:
          - sip_ext_incoming
          - rtp_int_100
    ..
    ..
      interfaces:
        - lo
        - eth0
        - eth0.100
        - eth1

On a CARRIER system the generated files would look like:

    lb01a:
    ..
    ..
      eth0.100:
        hwaddr: ff:ff:ff:ff:ff:ff
        ip: 192.168.1.2
        netmask: 255.255.255.0
        shared_ip:
          - 192.168.1.3
        shared_v6ip: ~
        type:
          - sip_ext_incoming
    ..
    ..
      interfaces:
        - lo
        - eth0
        - eth0.100
        - eth1
    ..
    ..
    prx01a:
    ..
    ..
      eth0.100:
        hwaddr: ff:ff:ff:ff:ff:ff
        ip: 192.168.1.20
        netmask: 255.255.255.0
        shared_ip:
          - 192.168.1.30
        shared_v6ip: ~
        type:
          - rtp_int_100
    ..
    ..
      interfaces:
        - lo
        - eth0
        - eth0.100
        - eth1
    ..
    ..
    lb01b:
    ..
    ..
      eth0.100:
        hwaddr: ff:ff:ff:ff:ff:ff
        ip: 192.168.1.4
        netmask: 255.255.255.0
        shared_ip:
          - 192.168.1.3
        shared_v6ip: ~
        type:
          - sip_ext_incoming
    ..
    ..
      interfaces:
        - lo
        - eth0
        - eth0.100
        - eth1
    ..
    ..
    prx01b:
    ..
    ..
      eth0.100:
        hwaddr: ff:ff:ff:ff:ff:ff
        ip: 192.168.1.40
        netmask: 255.255.255.0
        shared_ip:
          - 192.168.1.30
        shared_v6ip: ~
        type:
          - rtp_int_100
    ..
    ..
      interfaces:
        - lo
        - eth0
        - eth0.100
        - eth1

As you can see from the above example, extra SIP interfaces must have type sip_ext_incoming. While sip_ext should be listed only once per host, there can be multiple sip_ext_incoming interfaces. The direction of communication through this SIP interface is incoming only. The Sipwise C5 will answer the incoming client registrations and other methods sent to this address and remember the interfaces used for clients' registrations to be able to send incoming calls to them from the same interface.

In order to use the interface for the outbound SIP communication it is necessary to add it to the extra_sockets section in /etc/ngcp-config/config.yml and select it in the outbound_socket peer preference.

So if using the above example we want to use the vlan100 IP as source interface towards a peer, the corresponding section may look like the following:

    extra_sockets:
      port_5064: udp:10.15.20.108:5064
      test: udp:10.15.20.108:6060
      int_100: udp:192.168.1.3:5060

The changes have to be applied:

    ngcpcfg apply 'added extra SIP and RTP socket'
    ngcpcfg push all

After applying the changes, a new SIP socket will listen on IP 192.168.1.3 on a CARRIER in the lb01 node, and this socket can now be used as source socket to send SIP messages to your peer, for example. In the above example we used the label int_100, so the new label "int_100" is now shown in the outbound_socket peer preference.

Also, an RTP socket is now listening on 192.168.1.3 on a PRO, and on 192.168.1.30 on a CARRIER prx01 node, and you can choose the new RTP socket by setting the parameter rtp_interface to the label "int_100" in your Domain/Subscriber/Peer preferences.

Normally, each interface that was configured with a type that starts with rtp_ can be selected individually as RTP interface in the Domain/Subscriber/Peer preferences. For example, if the interface types rtp_ext, rtp_int, and rtp_int_100 have been configured, the Domain/Subscriber/Peer preferences will allow the RTP interfaces to be selected as either ext, int, or int_100 in addition to "default".
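The naming rule in the paragraph above (strip the rtp_ prefix from each configured type and offer the result next to "default") can be written down as a small sketch. The function name is an assumption for illustration; it only reproduces the mapping described in the text.

```python
def rtp_interface_choices(interface_types):
    """Return the RTP interface names selectable in the preferences."""
    names = []
    for iftype in interface_types:
        if iftype.startswith("rtp_"):
            name = iftype[len("rtp_"):]
            if name not in names:  # the same type may sit on several interfaces
                names.append(name)
    return ["default"] + names


print(rtp_interface_choices(["sip_ext", "rtp_ext", "rtp_int", "rtp_int_100"]))
# ['default', 'ext', 'int', 'int_100']
```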

The same rtp_ interface type can be configured on multiple interfaces. If this is the case, and if ICE (Interactive Connectivity Establishment) is enabled for a Domain/Subscriber/Peer, it is possible to use ICE to automatically negotiate which interface should be used for RTP communications. ICE must be supported by the remote client for this to work.

For example, rtp_ext can be configured on multiple interfaces like so (abbreviated):

    ..
    ..
      eth0.100:
        type:
          - rtp_ext
    ..
      eth0.150:
        type:
          - rtp_ext
    ..
      eth1:
        type:
          - rtp_ext
    ..
    ..

In this example, the RTP interface ext will be available for selection in the Domain/Subscriber/Peer preferences. If selected and if ICE is enabled, the addresses of all three interfaces will be presented to the remote client, and ICE will be used to negotiate which one of them will be used for communications. This can be useful in multi-homed environments, or when remote clients are on private networks.

If the RTP port range configured via the config.yml keys rtpproxy.minport and rtpproxy.maxport is not sufficient to handle all concurrent calls, it is possible to load-balance the RTP ports across multiple interfaces. This is useful if the RTP proxy runs out of ports and if not enough additional ports are available.

To enable this, multiple interfaces with different addresses must be configured, and interface types of the format rtp_NAME:SUFFIX must be assigned to them. For example, if the RTP interface named ext should be load-balanced across three interfaces, they can be configured like so (abbreviated):

    ..
    ..
      eth0.100:
        type:
          - rtp_ext:1
    ..
      eth0.150:
        type:
          - rtp_ext:2
    ..
      eth1:
        type:
          - rtp_ext:3
    ..
    ..

In this example, all three given RTP interface types will be available for selection in the Domain/Subscriber/Peer preferences individually (as ext:1 and so on), but in addition to that, an interface named just ext will also be available for selection. If ext is selected, only one of the three RTP interfaces will be selected in a round-robin fashion, thus increasing the number of available RTP ports threefold. The round-robin algorithm only selects an interface if it actually has RTP ports available.
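The round-robin behaviour described above can be illustrated with a simplified model. The sketch below is not the rtpengine implementation: it tracks only a free-port counter per interface (a hypothetical bookkeeping scheme) and shows how an interface without free ports is skipped.

```python
class RtpInterfaceGroup:
    """Simplified model of one load-balanced RTP interface such as 'ext'."""

    def __init__(self, name, free_ports):
        # free_ports maps "NAME:SUFFIX" to the number of free RTP ports.
        self.name = name
        self.free_ports = dict(free_ports)
        self._order = list(free_ports)
        self._next = 0

    def allocate(self):
        """Pick the next interface in round-robin order that still has a free port."""
        for offset in range(len(self._order)):
            index = (self._next + offset) % len(self._order)
            candidate = self._order[index]
            if self.free_ports[candidate] > 0:
                self.free_ports[candidate] -= 1
                self._next = (index + 1) % len(self._order)
                return candidate
        return None  # every interface of the group is exhausted


group = RtpInterfaceGroup("ext", {"ext:1": 2, "ext:2": 0, "ext:3": 1})
print([group.allocate() for _ in range(4)])
# ['ext:1', 'ext:3', 'ext:1', None]
```

In this run ext:2 is never chosen because it has no free ports, and the fourth request fails once all counters reach zero.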

In a Sipwise C5 CARRIER system it is possible to have geographically distributed nodes in the same logical Sipwise C5 unit. Such a configuration typically involves the following elements:

- centralised management (web), database (db) and proxy (prx) nodes: these provide all higher level functionality, like system administration, subscriber registration, call routing, etc.

- distributed load balancer (lb) nodes: these serve as SBCs for the whole Sipwise C5 and handle SIP and RTP traffic to / from SIP endpoints (e.g. subscribers); and they also communicate with the central elements of Sipwise C5 (e.g. proxy nodes)

In case of such a Sipwise C5 node configuration it is possible to define cluster sets which are collections of Sipwise C5 nodes providing the load balancer functionality.

Cluster sets can be assigned to subscriber domains or SIP peers and will determine the route of SIP and RTP traffic for those sets of SIP endpoints:

- For SIP peers the selected nodes will be used to send outbound SIP traffic through

- For both SIP peers and subscriber domains the selected nodes will provide RTP relay functionality (the rtpengine Sipwise C5 component will run on those nodes)

There are 2 places in NGCP’s main configuration files where an entry for cluster sets must be inserted:

Declaration of cluster sets

This happens in the /etc/ngcp-config/config.yml file, see an example below:

    cluster_sets:
      default:
        dispatcher_id: 50
      default_set: default
      poland:
        dispatcher_id: 51
      type: distributed

Configuration entries are:

- <label>: an arbitrary label of the cluster set; in the above example we have 2 of them: default and poland; the cluster set default is always defined, even if cluster sets are not used

- <label>.dispatcher_id: a unique, numeric value that identifies a particular cluster set

- default_set: selects the default cluster set

- type: the type of cluster set; can be central or distributed

Assignment of cluster sets

This happens in the /etc/ngcp-config/network.yml file, see an example below:

    .
    .
    lb03a:
    .
    .
      vlan792:
        cluster_sets:
          - poland
        hwaddr: 00:00:00:00:00:00
        ip: 172.30.61.37
        netmask: 255.255.255.240
        shared_ip: 172.30.61.36
        type:
          - sip_int
        vlan_raw_device: bond0

In the network configuration file typically the load balancer (lb) nodes are assigned to cluster sets. More precisely: network interfaces of load balancer nodes that have sip_int type (those used for SIP signalling and NGCP’s internal rtpengine command protocol) are assigned to cluster sets.

In order to do such an assignment a cluster set’s label has to be added to the cluster_sets parameter, which is a list.
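The declaration and the assignment of cluster sets can be tied together in a short sketch: resolve a label to its dispatcher_id and list the sip_int interfaces assigned to it. Both dictionaries are hypothetical in-memory copies of the examples above (the interfaces list for lb03a is an assumption, since the example elides it), and the function names are for illustration only.

```python
# Hypothetical copy of the cluster_sets section of config.yml.
cluster_sets = {
    "default": {"dispatcher_id": 50},
    "default_set": "default",
    "poland": {"dispatcher_id": 51},
    "type": "distributed",
}

# Hypothetical copy of the relevant part of network.yml.
hosts = {
    "lb03a": {
        "interfaces": ["vlan792"],  # assumed; elided in the example above
        "vlan792": {
            "cluster_sets": ["poland"],
            "ip": "172.30.61.37",
            "shared_ip": "172.30.61.36",
            "type": ["sip_int"],
        },
    },
}


def dispatcher_id(cluster_sets, label=None):
    """Return the dispatcher_id of a cluster set; use default_set if no label is given."""
    if label is None:
        label = cluster_sets["default_set"]
    entry = cluster_sets.get(label)
    if not isinstance(entry, dict) or "dispatcher_id" not in entry:
        raise KeyError(f"unknown cluster set: {label!r}")
    return entry["dispatcher_id"]


def members(hosts, label):
    """List (host, interface) pairs whose sip_int interface is assigned to label."""
    found = []
    for hostname, host in hosts.items():
        for ifname in host.get("interfaces", []):
            iface = host.get(ifname, {})
            if "sip_int" in iface.get("type", []) and label in iface.get("cluster_sets", []):
                found.append((hostname, ifname))
    return found


print(dispatcher_id(cluster_sets, "poland"))  # 51
print(members(hosts, "poland"))               # [('lb03a', 'vlan792')]
```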

After modifying network configuration with cluster sets, the new configuration must be applied in the usual way:

    > ngcpcfg apply 'Added cluster sets'
    > ngcpcfg push all

For both SIP peers and subscriber domains you can select the cluster set labels predefined in the config.yml file.

SIP peers: In order to select a particular cluster set for a SIP peer you have to navigate to Peerings → select the peering group → select the peering server → Preferences → NAT and Media Flow Control and then Edit

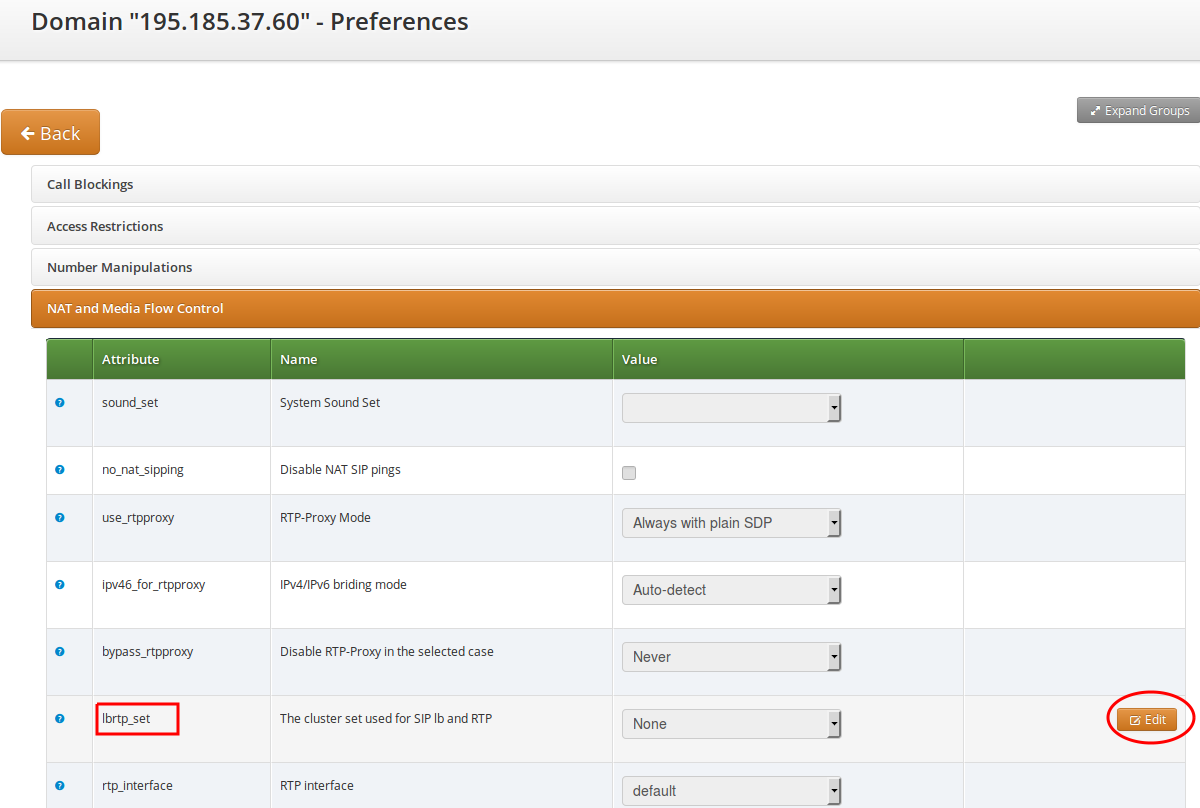

lbrtp_setparameter.Domains: In order to select a particular cluster set for a domain you have to navigate to Domains → select the domain → Preferences → NAT and Media Flow Control and then Edit

lbrtp_setparameter.